Edge Enhanced Direct Visual Odometry

Xin Wang, Wei Dong, Mingcai Zhou, Renju Li, Hongbin Zha

BMVC 2016

Abstract

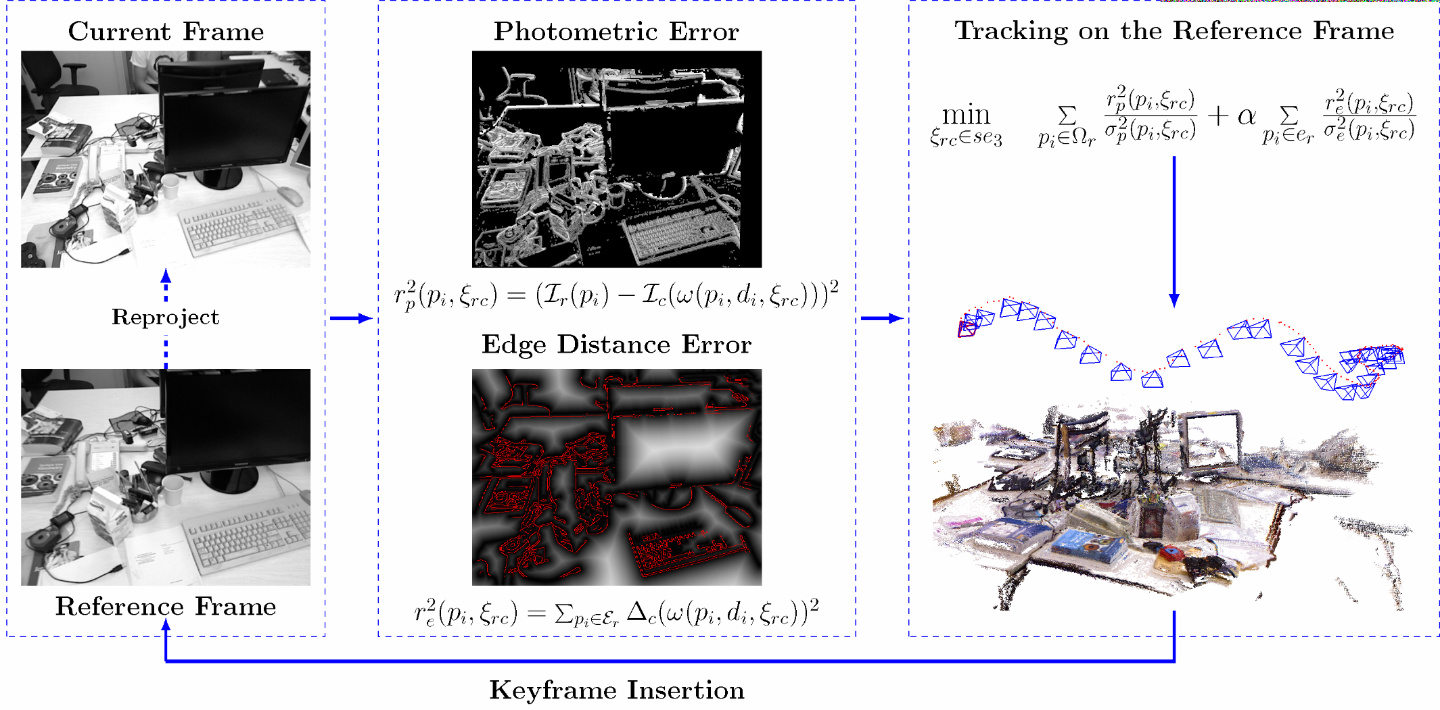

We propose an RGB-D visual odometry method that both minimizes the photometric error and aligns the edges between frames. The combination of the direct photometric information and the edge features leads to higher tracking accuracy and allows the approach to deal with challenging texture-less scenes.

In contrast to traditional line-feature-based methods, we use all edges rather than only line segments, avoiding the aperture problem and the uncertainty of endpoints. Instead of explicitly matching edge features, we design a dense representation of edges to align them, bridging direct and feature-based methods in tracking. Image alignment and feature matching are performed in a general framework, where not only pixels but also salient visual landmarks are aligned.

Evaluations on real-world benchmark datasets show that our method achieves competitive results in indoor scenes, especially in texture-less scenes, where it outperforms state-of-the-art algorithms.
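The abstract does not spell out the cost function, so the following is only a minimal sketch of the general idea of combining a photometric term with an edge-alignment term read from a dense edge representation. Everything in it is an assumption made for illustration: the dense representation is taken to be a brute-force distance map to the nearest edge pixel, the edge detector is a toy gradient test, the rigid-body warp of an RGB-D frame is replaced by an integer 2D pixel shift, and the names `edge_mask`, `edge_distance_map`, `joint_cost`, `best_shift` and the parameter `edge_weight` are hypothetical.

```python
import numpy as np


def edge_mask(image):
    """Toy edge detector (assumption): any pixel with a non-zero gradient."""
    gy, gx = np.gradient(image.astype(float))
    return np.hypot(gy, gx) > 0


def edge_distance_map(edges):
    """Assumed dense edge representation: distance to the nearest edge pixel."""
    ys, xs = np.nonzero(edges)
    if ys.size == 0:
        raise ValueError("edge mask contains no edge pixels")
    grid_y, grid_x = np.mgrid[0:edges.shape[0], 0:edges.shape[1]]
    # Brute force: squared distance from every pixel to every edge pixel.
    squared = (grid_y[..., None] - ys) ** 2 + (grid_x[..., None] - xs) ** 2
    return np.sqrt(squared.min(axis=-1))


def joint_cost(ref, cur, ref_edges, cur_edge_dist, shift, edge_weight=1.0):
    """Photometric error plus edge-alignment error for one candidate shift.

    The integer shift (dy, dx) stands in for the camera-motion warp.
    """
    dy, dx = shift
    h, w = ref.shape
    ys, xs = np.mgrid[0:h, 0:w]
    ty, tx = ys + dy, xs + dx
    # Keep only reference pixels that land inside the current frame.
    valid = (ty >= 0) & (ty < h) & (tx >= 0) & (tx < w)
    photo = cur[ty[valid], tx[valid]] - ref[valid]
    # Reference edge pixels should land where the distance map is near zero.
    on_edge = valid & ref_edges
    edge = cur_edge_dist[ty[on_edge], tx[on_edge]]
    photo_term = np.mean(photo ** 2) if photo.size else 0.0
    edge_term = np.mean(edge ** 2) if edge.size else 0.0
    return float(photo_term + edge_weight * edge_term)


def best_shift(ref, cur, radius=3, edge_weight=1.0):
    """Exhaustive search over integer shifts; a real system would optimize a pose."""
    ref_edges = edge_mask(ref)
    cur_edge_dist = edge_distance_map(edge_mask(cur))
    candidates = [(dy, dx)
                  for dy in range(-radius, radius + 1)
                  for dx in range(-radius, radius + 1)]
    costs = [joint_cost(ref, cur, ref_edges, cur_edge_dist, s, edge_weight)
             for s in candidates]
    return candidates[int(np.argmin(costs))]


# Synthetic frames: a bright square that moves one row down and two columns right.
ref = np.zeros((12, 12))
ref[3:7, 3:7] = 1.0
cur = np.zeros((12, 12))
cur[4:8, 5:9] = 1.0

estimated = best_shift(ref, cur)
print("estimated shift (dy, dx):", estimated)
```

Because the edge term is looked up in a dense map rather than computed from matched features, no explicit edge correspondence is needed in this sketch; the same lookup pattern would apply if the shift were replaced by a depth-based warp under a camera pose.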

Citation

@inproceedings{wang2016edge,
  title={Edge Enhanced Direct Visual Odometry.},
  author={Wang, Xin and Dong, Wei and Zhou, Mingcai and Li, Renju and Zha, Hongbin},
  booktitle={BMVC},
  year={2016}
}